Charles Schwab

We empower you today, so you can own your tomorrow.

Every day at Schwab is another opportunity to inspire innovative financial solutions that challenge the status quo. Your work is going to make a difference—for our clients, our communities, and in your future. That’s what being at Schwab is all about.

Find your team.

Financial Services Representative

Financial Services Representative

Your day is filled with helping others and your future is packed with potential. That’s what makes our customer service roles so unique. Whether you’re licensed or ready to get one through paid training, Schwab is the place to make a bigger impact.

Search all jobs

Financial Consultants & Series 7/63 Licensed Professionals

Financial Consultants & Series 7/63 Licensed Professionals

It’s the freedom to build client relationships, build trust, and build success. We're a team in which everyone can come together to create powerful change. Take control of your future at Schwab.

Search all jobs

Technology, Engineering, and Software Development

Technology, Engineering, and Software Development

Fuel innovation that touches millions of lives. We’re collaborating on cutting-edge technologies that lead our industry. And you’ll have the support of Schwab behind you every step of the way.

Search all jobs

"Schwab supported me throughout my licensing journey … the knowledge I gained helped me pass my exams." Jessica M. Client Relationship Specialist

"I owe it to my mentors and role models. Several managers and directors really took me under their wing." Miranda B. Specialist in Trader Services

"During my last 15 years in the military, I took care of others … that experience has translated well to working at Schwab, where we focus on taking care of our clients." Audrey W. Compliance Manager

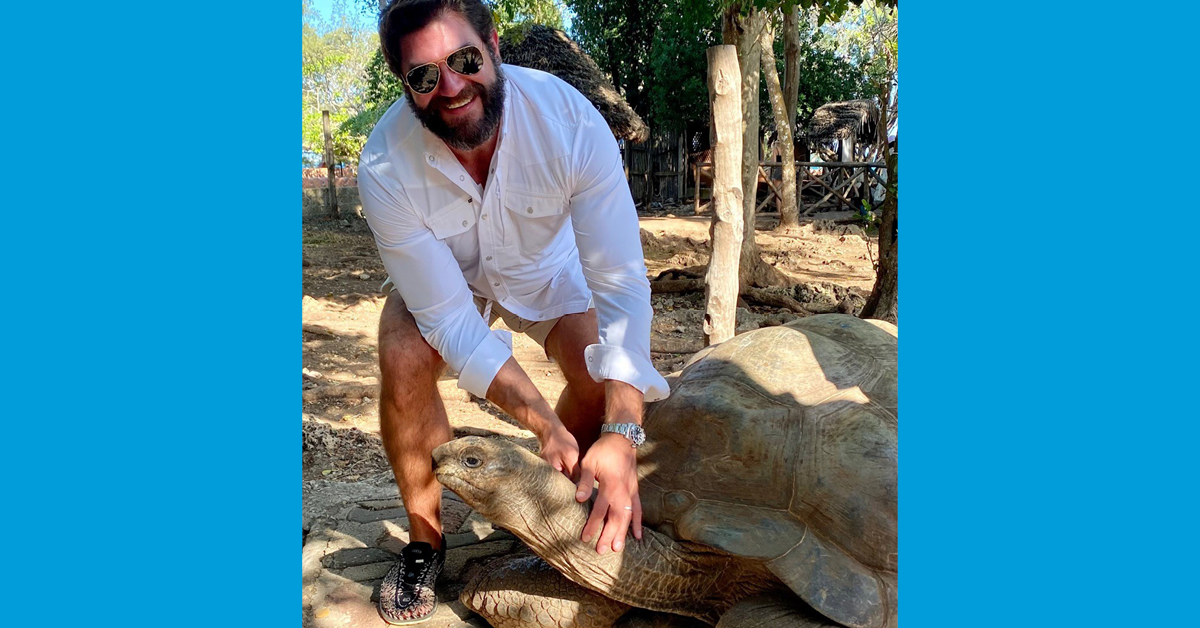

"My sabbatical came at the perfect time, as it allowed me to disconnect for a while and recharge. Plus, I was able to have amazing experiences with my family and travel to incredible places." Matt B. VP, Branch Manager

Career changers.

You don’t have to have a background in finance to have an amazing future with Schwab. So bring your passion for learning and your desire to make a difference. With our training, support, and our investment in your growth, you’ll find a new community and new kind of impact.

Our culture.

This is a tight-knit team. A place that’s collaborative and empowering. When you join, your fellow Schwabbies have your back and really want to help you reach your goals—together.

Benefits, rewards, and development.

We’re focused on you and the things you need to create a life and career that’s bold and balanced—whether it’s benefits, recognition, sabbaticals, opportunities to learn and grow, or amazing internal mobility.

Standing out from the crowd.

-

Learn more March 27, 2023

Learn more March 27, 2023 -

Students We’re all-in when it comes to developing talent – from internships and academies to our tech leader program. Learn more April 12, 2023

Students We’re all-in when it comes to developing talent – from internships and academies to our tech leader program. Learn more April 12, 2023 -

Diversity and inclusion We make sure everyone feels valued, supported, and welcomed. Because that’s what moves us forward. Learn more April 12, 2023

Diversity and inclusion We make sure everyone feels valued, supported, and welcomed. Because that’s what moves us forward. Learn more April 12, 2023 -

Schwab stories There’s no better way to discover life at Schwab than hearing it from the amazing people who experience it every day. Learn more April 12, 2023

Schwab stories There’s no better way to discover life at Schwab than hearing it from the amazing people who experience it every day. Learn more April 12, 2023 -

Learn more March 23, 2023

Learn more March 23, 2023 -

Schwab stories There’s no better way to discover life at Schwab than hearing it from the amazing people who experience it every day. Learn more April 13, 2023

Schwab stories There’s no better way to discover life at Schwab than hearing it from the amazing people who experience it every day. Learn more April 13, 2023 -

Learn more April 18, 2023

Learn more April 18, 2023 -

Learn more June 29, 2023

Learn more June 29, 2023 -

Learn more June 30, 2023

Learn more June 30, 2023 -

Learn more July 03, 2023

Learn more July 03, 2023 -

Learn more July 06, 2023

Learn more July 06, 2023 -

Students We’re all-in when it comes to developing talent – from internships and academies to our tech leader program. Learn more July 07, 2023

Students We’re all-in when it comes to developing talent – from internships and academies to our tech leader program. Learn more July 07, 2023 -

Learn more July 07, 2023

Learn more July 07, 2023 -

Learn more July 10, 2023

Learn more July 10, 2023 -

Learn more June 26, 2023

Learn more June 26, 2023 -

Diversity and inclusion We make sure everyone feels valued, supported, and welcomed. Because that’s what moves us forward. Learn more June 30, 2023

Diversity and inclusion We make sure everyone feels valued, supported, and welcomed. Because that’s what moves us forward. Learn more June 30, 2023 -

Learn more July 03, 2023

Learn more July 03, 2023 -

Learn more July 03, 2023

Learn more July 03, 2023 -

Learn more July 06, 2023

Learn more July 06, 2023 -

Schwab Stories There’s no better way to discover life at Schwab than hearing it from the amazing people who experience it every day. Learn more July 07, 2023

Schwab Stories There’s no better way to discover life at Schwab than hearing it from the amazing people who experience it every day. Learn more July 07, 2023 -

Learn more July 11, 2023

Learn more July 11, 2023 -

Learn more July 06, 2023

Learn more July 06, 2023 -

Learn more July 10, 2023

Learn more July 10, 2023 -

Learn more July 11, 2023

Learn more July 11, 2023 -

Learn more June 27, 2023

Learn more June 27, 2023 -

Learn more June 28, 2023

Learn more June 28, 2023 -

Learn more July 03, 2023

Learn more July 03, 2023 -

Client Service At Schwab, our commitment to seeing the world “through clients’ eyes” underlines everything we do, and our Client Service & Support team is often the first place client's experience it. Learn more July 07, 2023 Service Related Content

Client Service At Schwab, our commitment to seeing the world “through clients’ eyes” underlines everything we do, and our Client Service & Support team is often the first place client's experience it. Learn more July 07, 2023 Service Related Content -

Learn more July 07, 2023

Learn more July 07, 2023 -

Learn more July 07, 2023

Learn more July 07, 2023 -

6 Tips for Career Success as a College Graduate: Advice from a Schwab Campus Recruiter Graduating from college is a momentous occasion and it can be hard knowing where to start after earning your degree. As you're looking for your next opportunity, make sure to check out these helpful tips and words of advice from a Schwab Campus Recruiter to set yourself up for career success! Learn more June 21, 2023 Interns & Early Careers Story Hub

6 Tips for Career Success as a College Graduate: Advice from a Schwab Campus Recruiter Graduating from college is a momentous occasion and it can be hard knowing where to start after earning your degree. As you're looking for your next opportunity, make sure to check out these helpful tips and words of advice from a Schwab Campus Recruiter to set yourself up for career success! Learn more June 21, 2023 Interns & Early Careers Story Hub -

Benefits Spotlight: Matt B.'s Adventures While on Sabbatical Read how Matt B., a V.P. Branch Manager, used Schwab's Sabbatical Benefit to explore Africa with his family. Learn more June 21, 2023 Benefits Story Hub Home Page Related Content

Benefits Spotlight: Matt B.'s Adventures While on Sabbatical Read how Matt B., a V.P. Branch Manager, used Schwab's Sabbatical Benefit to explore Africa with his family. Learn more June 21, 2023 Benefits Story Hub Home Page Related Content -

Career Development: Les S. Builds a Diverse Career at Schwab Read how Les S. in Digital Product Management reinvented her career path to support innovation at Schwab. Learn more June 21, 2023 Career Development Story Hub

Career Development: Les S. Builds a Diverse Career at Schwab Read how Les S. in Digital Product Management reinvented her career path to support innovation at Schwab. Learn more June 21, 2023 Career Development Story Hub -

Women in Finance: Mayra C.'s Path to Leadership Learn how Mayra C., a VP Financial Consultant, built an impactful career as a woman in finance. Learn more June 21, 2023

Women in Finance: Mayra C.'s Path to Leadership Learn how Mayra C., a VP Financial Consultant, built an impactful career as a woman in finance. Learn more June 21, 2023 -

Brandon's Commitment to Service | Schwab Jobs Discover how Brandon is transforming the lives of others through passionate customer service & volunteerism. Learn more June 21, 2023 Culture & Inclusion

Brandon's Commitment to Service | Schwab Jobs Discover how Brandon is transforming the lives of others through passionate customer service & volunteerism. Learn more June 21, 2023 Culture & Inclusion -

Reach for the S.T.A.R.s: How to Prepare for a Behavioral-Based Interview at Schwab Interviewing is a necessary and essential part of the job application process. Here at Schwab, our interviewing approach stems from a behavioral-based interviewing methodology. Read on for advice and further information on the best way to prepare for an interview at Schwab! Learn more June 21, 2023 Career Development Story Hub

Reach for the S.T.A.R.s: How to Prepare for a Behavioral-Based Interview at Schwab Interviewing is a necessary and essential part of the job application process. Here at Schwab, our interviewing approach stems from a behavioral-based interviewing methodology. Read on for advice and further information on the best way to prepare for an interview at Schwab! Learn more June 21, 2023 Career Development Story Hub -

Hiring Our Heroes: Audrey, Veteran & Compliance Manager Read how Veteran Audrey W. found a Compliance Manager opportunity at Schwab through our Hiring Our Heroes partnership Learn more June 21, 2023 Culture & Inclusion Home Page Related Content

Hiring Our Heroes: Audrey, Veteran & Compliance Manager Read how Veteran Audrey W. found a Compliance Manager opportunity at Schwab through our Hiring Our Heroes partnership Learn more June 21, 2023 Culture & Inclusion Home Page Related Content -

Building a Career at Schwab: Travis's Story Read how Travis uncovered his career opportunity and gained professional development in Wealth Management as a Financial Consultant at Charles Schwab. Learn more July 20, 2023 Career Development

Building a Career at Schwab: Travis's Story Read how Travis uncovered his career opportunity and gained professional development in Wealth Management as a Financial Consultant at Charles Schwab. Learn more July 20, 2023 Career Development -

Intern to Schwabbie, Dillon's Story Celebrating National Intern Day: Learn how Dillon went from Intern Academy to full time employee to Employee Resource Group (ERG) creator & leader. Learn more July 27, 2023 Interns & Early Careers Story Hub

Intern to Schwabbie, Dillon's Story Celebrating National Intern Day: Learn how Dillon went from Intern Academy to full time employee to Employee Resource Group (ERG) creator & leader. Learn more July 27, 2023 Interns & Early Careers Story Hub -

Military Veterans Network: Tammy's Story Learn how Tammy supports veterans through our employee resource group Military Veterans Network at Schwab. Learn more July 28, 2023 Culture & Inclusion Story Hub

Military Veterans Network: Tammy's Story Learn how Tammy supports veterans through our employee resource group Military Veterans Network at Schwab. Learn more July 28, 2023 Culture & Inclusion Story Hub -

ERG Spotlight: Pride+ Happy PRIDE month! Learn about our LGBTQ+ Employee Resource Group at Schwab PRIDE+. Learn more July 31, 2023 Culture & Inclusion Story Hub

ERG Spotlight: Pride+ Happy PRIDE month! Learn about our LGBTQ+ Employee Resource Group at Schwab PRIDE+. Learn more July 31, 2023 Culture & Inclusion Story Hub -

Veteran Finds Work-Life Balance and Community at Schwab Read how military veteran Jordan H. found work/life balance and became a leader in the Families Network at Schwab. Learn more August 01, 2023 Culture & Inclusion Story Hub

Veteran Finds Work-Life Balance and Community at Schwab Read how military veteran Jordan H. found work/life balance and became a leader in the Families Network at Schwab. Learn more August 01, 2023 Culture & Inclusion Story Hub -

Jackie's FINRA Series 7 Exam Journey Read how passing the Series 7 exam transformed Jackie's career trajectory, and how the support she encountered at Schwab helped her become a Financial Consultant Partner at our firm. Learn more August 10, 2023 Career Development Story Hub Retail Related Content

Jackie's FINRA Series 7 Exam Journey Read how passing the Series 7 exam transformed Jackie's career trajectory, and how the support she encountered at Schwab helped her become a Financial Consultant Partner at our firm. Learn more August 10, 2023 Career Development Story Hub Retail Related Content -

Learn more August 16, 2023

Learn more August 16, 2023 -

Learn more August 10, 2023

Learn more August 10, 2023 -

ERG Spotlight: Schwab Organization of Latinx (SOL) Read how our Schwab Organization of Latinx (SOL) Employee Resource Group empowers Latinx individuals to succeed in their professional careers. Learn more September 13, 2023 Culture & Inclusion Story Hub

ERG Spotlight: Schwab Organization of Latinx (SOL) Read how our Schwab Organization of Latinx (SOL) Employee Resource Group empowers Latinx individuals to succeed in their professional careers. Learn more September 13, 2023 Culture & Inclusion Story Hub -

Career Development: How Leslie R. Grew Professionally & Forged Connections Through Shared Culture Read how Leslie R. experienced career development at Schwab while using her cultural background to make finance accessible to non-English speaking clients. Learn more September 21, 2023 Career Development Story Hub

Career Development: How Leslie R. Grew Professionally & Forged Connections Through Shared Culture Read how Leslie R. experienced career development at Schwab while using her cultural background to make finance accessible to non-English speaking clients. Learn more September 21, 2023 Career Development Story Hub -

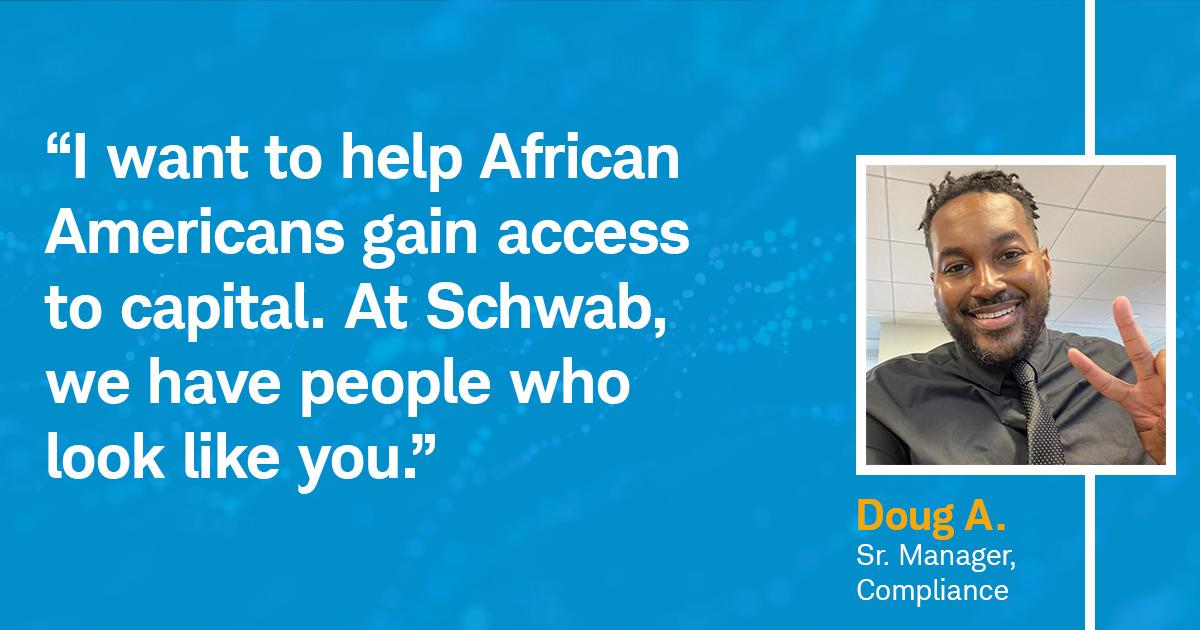

Driving Change Through Diverse Leadership Schwabbie Doug A. was nominated by our Black Professionals at Charles Schwab (BPACS) Employee Resource Group to be a part of the IMPACT Development Program. Read how the program inspired him to “help African Americans gain access to capital…build community, and move our D&I strategy forward.” Learn more September 25, 2023 Culture & Inclusion Story Hub

Driving Change Through Diverse Leadership Schwabbie Doug A. was nominated by our Black Professionals at Charles Schwab (BPACS) Employee Resource Group to be a part of the IMPACT Development Program. Read how the program inspired him to “help African Americans gain access to capital…build community, and move our D&I strategy forward.” Learn more September 25, 2023 Culture & Inclusion Story Hub -

Women in Tech: Olivia V. Meet Olivia V., one of our Technology Product Management superstars. Read how, over the course of 13 years at Schwab, she’s transitioned from finance into tech and built an impactful career. Learn more September 29, 2023 Career Development Story Hub Home Page Related Content Technology Related Content

Women in Tech: Olivia V. Meet Olivia V., one of our Technology Product Management superstars. Read how, over the course of 13 years at Schwab, she’s transitioned from finance into tech and built an impactful career. Learn more September 29, 2023 Career Development Story Hub Home Page Related Content Technology Related Content -

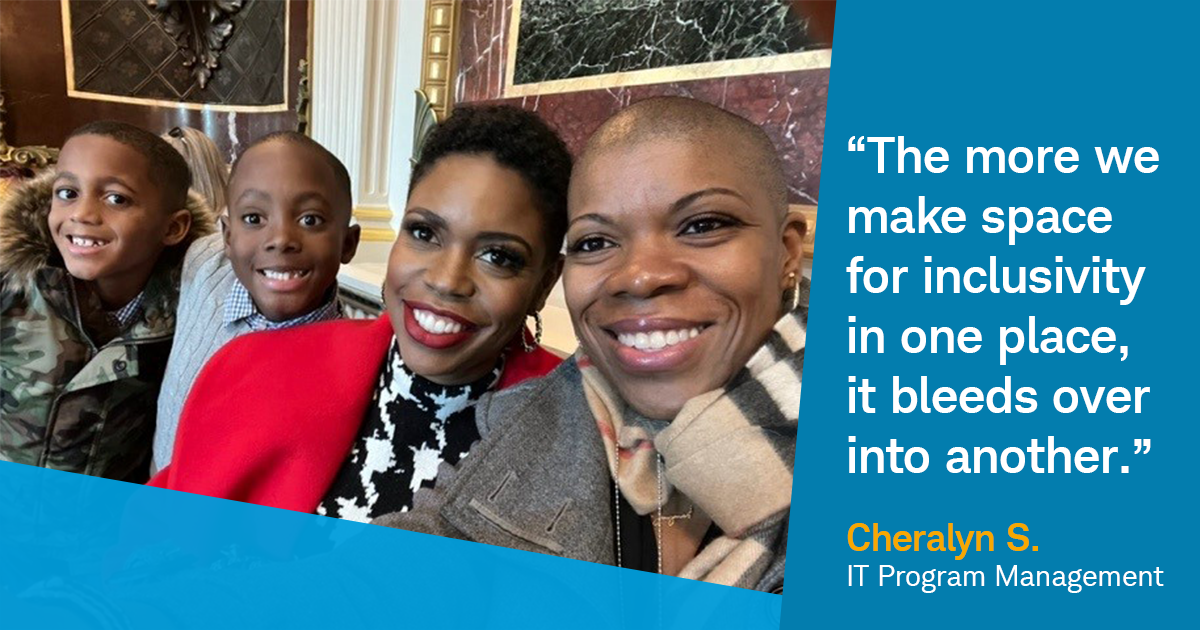

Allyship Matters: Finding Success and Authenticity at Home and at Work In recognition of National Coming Out Day, we’re highlighting Cheralyn S., a leader in IT Program Management and member of the LGBTQ+ community. Read about her coming-out journey and learn how she found allyship and authenticity in the workplace and beyond. Learn more October 11, 2023 Culture & Inclusion Story Hub

Allyship Matters: Finding Success and Authenticity at Home and at Work In recognition of National Coming Out Day, we’re highlighting Cheralyn S., a leader in IT Program Management and member of the LGBTQ+ community. Read about her coming-out journey and learn how she found allyship and authenticity in the workplace and beyond. Learn more October 11, 2023 Culture & Inclusion Story Hub -

Build Your Career: 30 Years at Schwab with Robert K., VP Branch Manager Learn how Robert K., a 30-year Schwabbie and VP Branch Manager, used his passion for investment to experience career growth, professional stability, and become a leader in finance. Learn more October 23, 2023 Career Development Story Hub Retail Related Content

Build Your Career: 30 Years at Schwab with Robert K., VP Branch Manager Learn how Robert K., a 30-year Schwabbie and VP Branch Manager, used his passion for investment to experience career growth, professional stability, and become a leader in finance. Learn more October 23, 2023 Career Development Story Hub Retail Related Content -

Career Changer: Academia to Financial Services Learn how Miranda used her degree in Machine Learning to transition from a scientific researcher to a brokerage specialist in Trader Services. Learn more November 06, 2023 Career Development Story Hub Home Page Related Content Service Related Content SR Sidebar Related Content

Career Changer: Academia to Financial Services Learn how Miranda used her degree in Machine Learning to transition from a scientific researcher to a brokerage specialist in Trader Services. Learn more November 06, 2023 Career Development Story Hub Home Page Related Content Service Related Content SR Sidebar Related Content -

Schwoomerangs: Returning to Schwab with Nichole S. Learn why Nichole S., a Director in Talent & Organizational Development and one of our "Schwoomerangs", returned to Schwab -- and how her work helps others thrive professionally. Learn more November 14, 2023 Life at Schwab Culture & Inclusion Story Hub

Schwoomerangs: Returning to Schwab with Nichole S. Learn why Nichole S., a Director in Talent & Organizational Development and one of our "Schwoomerangs", returned to Schwab -- and how her work helps others thrive professionally. Learn more November 14, 2023 Life at Schwab Culture & Inclusion Story Hub -

Season of Giving: Empowering Communities Through Volunteerism with Daniel R. Daniel, United States Marine Corps Veteran and Director, is playing a crucial part in fighting food insecurity for those in need. Learn about his journey from the military to Schwab, how he's creating a positive impact through volunteerism, and the importance of lending a helping hand. Learn more November 16, 2023 Career Development Culture & Inclusion Story Hub

Season of Giving: Empowering Communities Through Volunteerism with Daniel R. Daniel, United States Marine Corps Veteran and Director, is playing a crucial part in fighting food insecurity for those in need. Learn about his journey from the military to Schwab, how he's creating a positive impact through volunteerism, and the importance of lending a helping hand. Learn more November 16, 2023 Career Development Culture & Inclusion Story Hub -

Empathy Through Art: How the “Pathos Project” Unveils Our Unique Experiences Catch up with Wannie, one of the creators of The Pathos Project, and Lindsay, a featured artists, to understand how the accessibility of art strengthens our Schwab community and unites us under common understanding. Learn more November 17, 2023 Life at Schwab Culture & Inclusion Story Hub

Empathy Through Art: How the “Pathos Project” Unveils Our Unique Experiences Catch up with Wannie, one of the creators of The Pathos Project, and Lindsay, a featured artists, to understand how the accessibility of art strengthens our Schwab community and unites us under common understanding. Learn more November 17, 2023 Life at Schwab Culture & Inclusion Story Hub -

ERG Spotlight: Families at Schwab (FAMS) Learn about our Families at Schwab (FAMS) Employee Resource Group, where community, education, and networking opportunities are helping parents and guardians thrive. Learn more November 28, 2023 Life at Schwab Benefits Culture & Inclusion Story Hub

ERG Spotlight: Families at Schwab (FAMS) Learn about our Families at Schwab (FAMS) Employee Resource Group, where community, education, and networking opportunities are helping parents and guardians thrive. Learn more November 28, 2023 Life at Schwab Benefits Culture & Inclusion Story Hub -

Schwoomerangs: Returning to Schwab with Jamie F. Read about how “Schwoomerang” Jamie, pursued career opportunities outside of our company and later returned. As a Manager in Talent Acquisition, she’s using her skills to attract top-tier talent and is happy to be with a company that prioritizes her professional growth. Learn more November 28, 2023 Benefits Career Development Culture & Inclusion Story Hub

Schwoomerangs: Returning to Schwab with Jamie F. Read about how “Schwoomerang” Jamie, pursued career opportunities outside of our company and later returned. As a Manager in Talent Acquisition, she’s using her skills to attract top-tier talent and is happy to be with a company that prioritizes her professional growth. Learn more November 28, 2023 Benefits Career Development Culture & Inclusion Story Hub -

Culture at Schwab: Creating Positivity with Chris W. Read how Specialist Chris W. spreads positivity and inspiration every day at Schwab. Learn more December 21, 2023 Life at Schwab Career Development Culture & Inclusion Story Hub

Culture at Schwab: Creating Positivity with Chris W. Read how Specialist Chris W. spreads positivity and inspiration every day at Schwab. Learn more December 21, 2023 Life at Schwab Career Development Culture & Inclusion Story Hub -

Schwoomerangs: Returning to Schwab with Maressa M. Read how Maressa M., a Manager in Event & Production Services, rediscovered our warm culture and career opportunities after returning to our team. Learn more January 04, 2024 Career Development Culture & Inclusion Story Hub

Schwoomerangs: Returning to Schwab with Maressa M. Read how Maressa M., a Manager in Event & Production Services, rediscovered our warm culture and career opportunities after returning to our team. Learn more January 04, 2024 Career Development Culture & Inclusion Story Hub -

A Journey of Ambition and Growth: 40 Years in Financial Services Read about Wanda's professional growth, desire to help others, and impactful career spanning 40 years at Schwab. Learn more January 31, 2024 Career Development Culture & Inclusion Story Hub Home Page Related Content

A Journey of Ambition and Growth: 40 Years in Financial Services Read about Wanda's professional growth, desire to help others, and impactful career spanning 40 years at Schwab. Learn more January 31, 2024 Career Development Culture & Inclusion Story Hub Home Page Related Content -

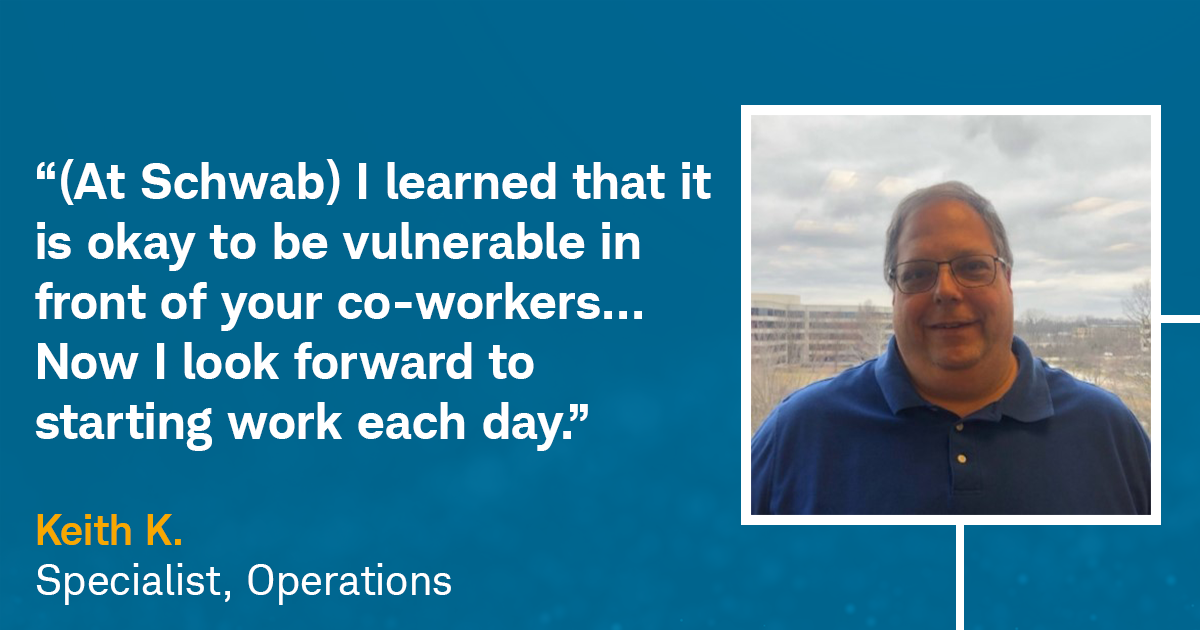

How Vulnerability Leads to Better Client Service Read how career changer Keith K. uses his empathy to serve clients - and how our caring culture empowered him to overcome obstacles, be his authentic self, and grow in his career. Learn more February 14, 2024 Career Development Culture & Inclusion Story Hub

How Vulnerability Leads to Better Client Service Read how career changer Keith K. uses his empathy to serve clients - and how our caring culture empowered him to overcome obstacles, be his authentic self, and grow in his career. Learn more February 14, 2024 Career Development Culture & Inclusion Story Hub -

Find Your Fit: 10 Essential Questions to Ask During Your Job Interview To help you along your job hunting journey, we put together “10 Essential Questions to Ask During Your Job Interview”. Read through to learn how to uncover key elements of company’s culture and see how our own Schwab Talent Advisors answer these important questions. Learn more February 21, 2024 Career Development Culture & Inclusion Story Hub Home Page Related Content

Find Your Fit: 10 Essential Questions to Ask During Your Job Interview To help you along your job hunting journey, we put together “10 Essential Questions to Ask During Your Job Interview”. Read through to learn how to uncover key elements of company’s culture and see how our own Schwab Talent Advisors answer these important questions. Learn more February 21, 2024 Career Development Culture & Inclusion Story Hub Home Page Related Content -

25 for 25: A Wellness Challenge | Schwab Jobs Charles Schwab Abilities Network (CSAN) and Military Veteran Network (MVN), two of our 11 Employee Resource Groups at Schwab, jointly presented a mindful challenge to Schwabbies entitled the 25 for 25 Challenge. Read on to find out the significance behind the number "25" and why this challenge was so impactful! Learn more June 21, 2023 Culture & Inclusion Story Hub

25 for 25: A Wellness Challenge | Schwab Jobs Charles Schwab Abilities Network (CSAN) and Military Veteran Network (MVN), two of our 11 Employee Resource Groups at Schwab, jointly presented a mindful challenge to Schwabbies entitled the 25 for 25 Challenge. Read on to find out the significance behind the number "25" and why this challenge was so impactful! Learn more June 21, 2023 Culture & Inclusion Story Hub -

NERDing Out: Schwab’s Associate Software Engineering Program What do you get when you combine on-the-job training, continuous learning & development opportunities, and the power to grow your skills & acumen in the tech space? You guessed it – the Associate Software Engineering program! Read on to learn why being a NERD is such a great experience for graduates in the tech space. Learn more June 21, 2023 Interns & Early Careers Story Hub

NERDing Out: Schwab’s Associate Software Engineering Program What do you get when you combine on-the-job training, continuous learning & development opportunities, and the power to grow your skills & acumen in the tech space? You guessed it – the Associate Software Engineering program! Read on to learn why being a NERD is such a great experience for graduates in the tech space. Learn more June 21, 2023 Interns & Early Careers Story Hub -

How to Start Networking & Make Connections Read on for networking advice from a Schwab Sourcing advisor on why it’s important and how to make meaningful connections that will help you find job opportunities and advance your career! Learn more June 21, 2023 Career Development Story Hub

How to Start Networking & Make Connections Read on for networking advice from a Schwab Sourcing advisor on why it’s important and how to make meaningful connections that will help you find job opportunities and advance your career! Learn more June 21, 2023 Career Development Story Hub -

Careers in Tech: Personalized Investing Engineering Read how Motif & Schwab combined forces to disrupt the finance industry with innovative technology and personalized investing engineering. Learn more June 21, 2023 Life at Schwab Story Hub Technology Related Content

Careers in Tech: Personalized Investing Engineering Read how Motif & Schwab combined forces to disrupt the finance industry with innovative technology and personalized investing engineering. Learn more June 21, 2023 Life at Schwab Story Hub Technology Related Content -

Service at Schwab: Finding Fulfillment in Your Career Through Serving Clients Are you someone who genuinely enjoys helping others? Do you value improving yourself professionally and the opportunity to grow your skills all while receiving support from leadership? If this resonates with you, a career in Service may be the perfect place to start your career journey! Learn more June 21, 2023 Life at Schwab Story Hub

Service at Schwab: Finding Fulfillment in Your Career Through Serving Clients Are you someone who genuinely enjoys helping others? Do you value improving yourself professionally and the opportunity to grow your skills all while receiving support from leadership? If this resonates with you, a career in Service may be the perfect place to start your career journey! Learn more June 21, 2023 Life at Schwab Story Hub -

Dorian’s Journey from an HBCU to Charles Schwab | Schwab Jobs Explore what it's like to transition from college to corporate America - Learn about Dorian's journey from a Historically Black College & University (HBCU) to the finance world with Schwab. Learn more June 21, 2023 Life at Schwab Story Hub

Dorian’s Journey from an HBCU to Charles Schwab | Schwab Jobs Explore what it's like to transition from college to corporate America - Learn about Dorian's journey from a Historically Black College & University (HBCU) to the finance world with Schwab. Learn more June 21, 2023 Life at Schwab Story Hub -

Supporting First-Gen College Students: Rene's Story Schwab helps first generation college students like Rene become a Financial Consultant with employee resource groups, higher education and tuition reimbursement. Learn more June 21, 2023 Interns & Early Careers Story Hub

Supporting First-Gen College Students: Rene's Story Schwab helps first generation college students like Rene become a Financial Consultant with employee resource groups, higher education and tuition reimbursement. Learn more June 21, 2023 Interns & Early Careers Story Hub -

How to Turn Your Internship Into a Full-Time Career Learn tips on how to put yourself in the best position for successfully receiving a full-time job offer from your internship. Learn more June 21, 2023 Life at Schwab Story Hub

How to Turn Your Internship Into a Full-Time Career Learn tips on how to put yourself in the best position for successfully receiving a full-time job offer from your internship. Learn more June 21, 2023 Life at Schwab Story Hub -

Intern to Financial Consultant: Catherine & Isabel’s Journey Through the FCDA Program The Financial Consultant Development Academy at TD Ameritrade is an opportunity for college graduates to make a difference in the lives of clients by helping them achieve their financial goals. Read the article to learn more about how you can make an impact in your financial services career! Learn more June 21, 2023 Interns & Early Careers Story Hub

Intern to Financial Consultant: Catherine & Isabel’s Journey Through the FCDA Program The Financial Consultant Development Academy at TD Ameritrade is an opportunity for college graduates to make a difference in the lives of clients by helping them achieve their financial goals. Read the article to learn more about how you can make an impact in your financial services career! Learn more June 21, 2023 Interns & Early Careers Story Hub -

Schwab's Intern Alumni: From Intern to Talent Acquisition Manager Learn how this Schwabbie turned her internship and passion for human resources into a full-time Talent Acquisition Management role. Learn more June 21, 2023 Life at Schwab Story Hub

Schwab's Intern Alumni: From Intern to Talent Acquisition Manager Learn how this Schwabbie turned her internship and passion for human resources into a full-time Talent Acquisition Management role. Learn more June 21, 2023 Life at Schwab Story Hub -

An Empathetic Approach to Finance Learn how our Client Relationship Specialists help clients with their personal financial goals including wills, trusts, and estate plans all while taking an empathetic approach to finance. Learn more June 21, 2023 Life at Schwab Story Hub

An Empathetic Approach to Finance Learn how our Client Relationship Specialists help clients with their personal financial goals including wills, trusts, and estate plans all while taking an empathetic approach to finance. Learn more June 21, 2023 Life at Schwab Story Hub -

Schwab’s Intern Alumni: From Intern to Product Manager Learn how this Schwabbie turned an internship into a full-time Product Management role while learning data analytics programs such as SQL, Python, and Tableau. Learn more June 21, 2023 Interns & Early Careers Story Hub

Schwab’s Intern Alumni: From Intern to Product Manager Learn how this Schwabbie turned an internship into a full-time Product Management role while learning data analytics programs such as SQL, Python, and Tableau. Learn more June 21, 2023 Interns & Early Careers Story Hub -

Systems Engineer and Paralympian Tony V.'s Journey | Schwab Jobs Learn how Sr. Systems Engineer and former Paralympic Gold Medalist Tony V. found a support at Schwab. Learn more June 21, 2023 Life at Schwab Story Hub

Systems Engineer and Paralympian Tony V.'s Journey | Schwab Jobs Learn how Sr. Systems Engineer and former Paralympic Gold Medalist Tony V. found a support at Schwab. Learn more June 21, 2023 Life at Schwab Story Hub -

What It’s Like Being a Software Developer Engineer | Schwab Jobs From HBCU to Schwab Technology: Amari’s Career as a Software Developer Engineer. Read about Amari's journey from his college campus to working in financial technology at Schwab! Learn more June 21, 2023 Life at Schwab Story Hub

What It’s Like Being a Software Developer Engineer | Schwab Jobs From HBCU to Schwab Technology: Amari’s Career as a Software Developer Engineer. Read about Amari's journey from his college campus to working in financial technology at Schwab! Learn more June 21, 2023 Life at Schwab Story Hub -

Women in Tech: An Interview With Kristin, Director of Schwab's NERD Program Learn more about Schwab's NERD program and Kristin's career journey in technology. Learn more June 21, 2023 Culture & Inclusion Story Hub

Women in Tech: An Interview With Kristin, Director of Schwab's NERD Program Learn more about Schwab's NERD program and Kristin's career journey in technology. Learn more June 21, 2023 Culture & Inclusion Story Hub -

How to Advocate for Women's Rights in the Workplace Find out how Schwab strives to be one of the best workplaces for women in business & finance through promoting gender equality & women's rights. Learn more June 21, 2023 Culture & Inclusion Story Hub

How to Advocate for Women's Rights in the Workplace Find out how Schwab strives to be one of the best workplaces for women in business & finance through promoting gender equality & women's rights. Learn more June 21, 2023 Culture & Inclusion Story Hub -

Celebrating Over 20 Years at Schwab: Tim’s Commitment to Service As the end of April is approaching, so is Tim R.’s length of service at Schwab. From his time in the military to his tenure at Schwab, Tim’s commitment to his clients is a great reminder of the foundation that Schwab was built on – service is at the heart of everything we do. Learn more June 21, 2023 Life at Schwab Story Hub

Celebrating Over 20 Years at Schwab: Tim’s Commitment to Service As the end of April is approaching, so is Tim R.’s length of service at Schwab. From his time in the military to his tenure at Schwab, Tim’s commitment to his clients is a great reminder of the foundation that Schwab was built on – service is at the heart of everything we do. Learn more June 21, 2023 Life at Schwab Story Hub -

ERG Overview: How GLOBE Fosters Belonging Learn more about Schwab's employee resource group, GLOBE, and how Schwab celebrates cultural differences. Learn more June 21, 2023 Culture & Inclusion Story Hub

ERG Overview: How GLOBE Fosters Belonging Learn more about Schwab's employee resource group, GLOBE, and how Schwab celebrates cultural differences. Learn more June 21, 2023 Culture & Inclusion Story Hub -

Our Culture of Appreciation We appreciate our Schwabbies each and every day! Read how appreciation is part of Schwab's culture. Learn more June 21, 2023 Culture & Inclusion Story Hub

Our Culture of Appreciation We appreciate our Schwabbies each and every day! Read how appreciation is part of Schwab's culture. Learn more June 21, 2023 Culture & Inclusion Story Hub -

How Schwab & TD Ameritrade are Integrating Their Cultures in a Virtual World | Schwab Jobs Learn more about the ways in which Schwab and TD Ameritrade are joining cultures through a Culture Club that explores making connections, employee experience themes, sharing ideas, and empowering our employees to grow together. Learn more June 21, 2023 Life at Schwab Story Hub

How Schwab & TD Ameritrade are Integrating Their Cultures in a Virtual World | Schwab Jobs Learn more about the ways in which Schwab and TD Ameritrade are joining cultures through a Culture Club that explores making connections, employee experience themes, sharing ideas, and empowering our employees to grow together. Learn more June 21, 2023 Life at Schwab Story Hub -

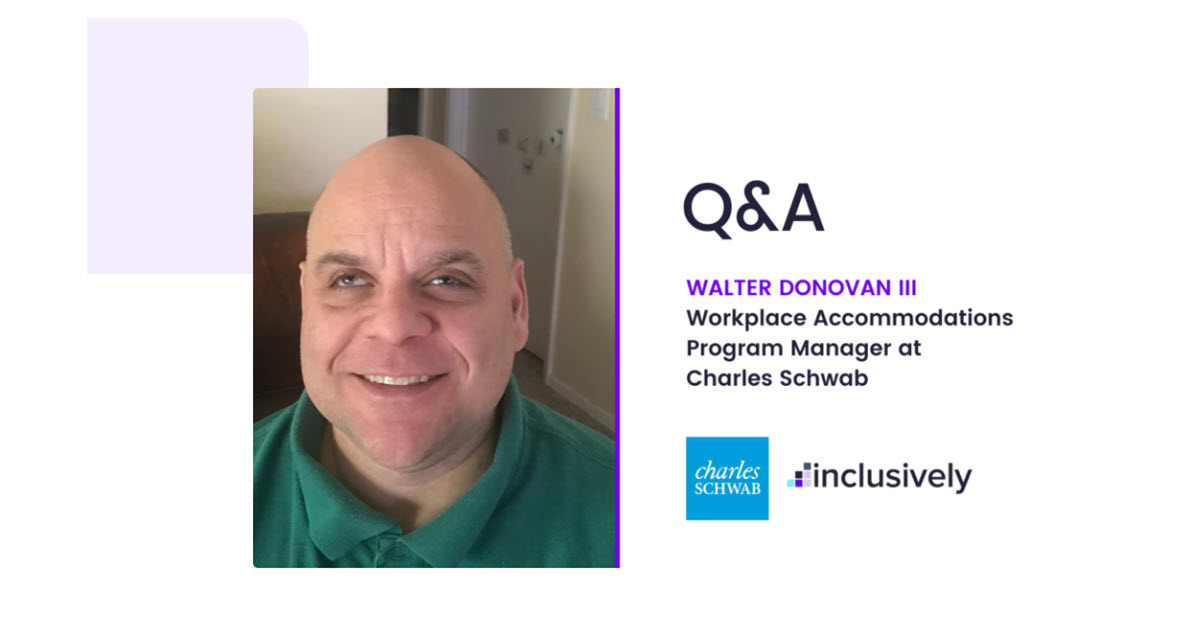

Disability Advocacy in the Workplace: Schwab's Partnership with Inclusively Learn more about Schwab's partnership with Inclusively in an interview with Walt D., III. Learn more June 21, 2023 Culture & Inclusion Story Hub

Disability Advocacy in the Workplace: Schwab's Partnership with Inclusively Learn more about Schwab's partnership with Inclusively in an interview with Walt D., III. Learn more June 21, 2023 Culture & Inclusion Story Hub -

Latinas in Tech: Overcoming Imposter Syndrome Read Jessica's story on her career progression in tech as a Latina. Find out how to build confidence, gain tech experience, & overcome imposter syndrome! Learn more June 21, 2023 Culture & Inclusion Story Hub Technology Related Content

Latinas in Tech: Overcoming Imposter Syndrome Read Jessica's story on her career progression in tech as a Latina. Find out how to build confidence, gain tech experience, & overcome imposter syndrome! Learn more June 21, 2023 Culture & Inclusion Story Hub Technology Related Content -

Mental Health at Work: Creating Community & Navigating Neurodiversity in the Workplace | Schwab Jobs As a continuation in a series around balancing mental health in the workplace, read on to learn more about Sean C.'s journey of being diagnosed with ADHD and how that has enlightened, enhanced, and altered his life, both personally and professionally. Learn more June 21, 2023 Culture & Inclusion Story Hub

Mental Health at Work: Creating Community & Navigating Neurodiversity in the Workplace | Schwab Jobs As a continuation in a series around balancing mental health in the workplace, read on to learn more about Sean C.'s journey of being diagnosed with ADHD and how that has enlightened, enhanced, and altered his life, both personally and professionally. Learn more June 21, 2023 Culture & Inclusion Story Hub -

Military Spouse Appreciation Day: An Interview with Lauren C. | Schwab Jobs Military spouses play an important role in the military community. Read how Lauren unleashed her career at Schwab and supported her family while her husband was in the Navy. Learn more June 21, 2023 Culture & Inclusion Story Hub

Military Spouse Appreciation Day: An Interview with Lauren C. | Schwab Jobs Military spouses play an important role in the military community. Read how Lauren unleashed her career at Schwab and supported her family while her husband was in the Navy. Learn more June 21, 2023 Culture & Inclusion Story Hub -

We're Better Together: Schwab's Commitment to Giving Back | Schwab Jobs Service is at the heart of Schwab’s culture. Whether it’s serving our own Schwabbies, clients, or the communities around us, we value the opportunity to give back to those we care about. Hear from our Outstanding Community Service Award winners on how they used their Time to Volunteer benefit! Learn more June 21, 2023 Culture & Inclusion Story Hub

We're Better Together: Schwab's Commitment to Giving Back | Schwab Jobs Service is at the heart of Schwab’s culture. Whether it’s serving our own Schwabbies, clients, or the communities around us, we value the opportunity to give back to those we care about. Hear from our Outstanding Community Service Award winners on how they used their Time to Volunteer benefit! Learn more June 21, 2023 Culture & Inclusion Story Hub -

Supporting Military Veterans at Schwab: Robert W.’s Passion for Community & Engagement | Schwab Jobs As a part of National Military Appreciation Month, and as a continuation in our series on balancing mental health in the workplace, Robert W. shares the impact Military Veterans Network has made on his career, how Schwab supports veterans, and the invaluable sense of community & family the ERG gives to its members. Learn more June 21, 2023 Culture & Inclusion Story Hub

Supporting Military Veterans at Schwab: Robert W.’s Passion for Community & Engagement | Schwab Jobs As a part of National Military Appreciation Month, and as a continuation in our series on balancing mental health in the workplace, Robert W. shares the impact Military Veterans Network has made on his career, how Schwab supports veterans, and the invaluable sense of community & family the ERG gives to its members. Learn more June 21, 2023 Culture & Inclusion Story Hub -

Unleash the possibilities: Women in Tech at Schwab As Schwab continues to grow as a firm, so does our technology space. We sat down with two amazing women in tech, to ask how they’ve seen this industry grow at Schwab and what's their favorite part about being a Schwabbie! Learn more June 21, 2023 Culture & Inclusion Story Hub Technology Related Content

Unleash the possibilities: Women in Tech at Schwab As Schwab continues to grow as a firm, so does our technology space. We sat down with two amazing women in tech, to ask how they’ve seen this industry grow at Schwab and what's their favorite part about being a Schwabbie! Learn more June 21, 2023 Culture & Inclusion Story Hub Technology Related Content -

Women in Tech: Mentorship & Advocacy in Security | Schwab Jobs Learn how Falycia, a U.S. Marine and technology enthusiast, got her start in security at Schwab. Learn more June 21, 2023 Culture & Inclusion Story Hub

Women in Tech: Mentorship & Advocacy in Security | Schwab Jobs Learn how Falycia, a U.S. Marine and technology enthusiast, got her start in security at Schwab. Learn more June 21, 2023 Culture & Inclusion Story Hub -

Our List of the Best Schwab Blogs Highlighting the best Schwab stories on employee resource groups, benefits, early talent programs, & more. Find out our most popular Schwab blogs! Learn more June 21, 2023 Life at Schwab Story Hub

Our List of the Best Schwab Blogs Highlighting the best Schwab stories on employee resource groups, benefits, early talent programs, & more. Find out our most popular Schwab blogs! Learn more June 21, 2023 Life at Schwab Story Hub -

Career Changers: Mother and Daughter Financial Services Duo | Schwab Jobs Cindy and Randi M. made a career switch to financial services, becoming a mother & daughter duo at Schwab. Learn more June 21, 2023

Career Changers: Mother and Daughter Financial Services Duo | Schwab Jobs Cindy and Randi M. made a career switch to financial services, becoming a mother & daughter duo at Schwab. Learn more June 21, 2023 -

A Culture of Service: Going Above and Beyond with Matt L. | Schwab Jobs Our Client Service & Support professionals enact positive change in the lives of our clients each and every day. For Matt L., it was only 4 months in his new position at Schwab that he was able to reflect on what bringing this purpose to life meant. Read on to find out how Matt helps his clients Own Their Tomorrow! Learn more June 21, 2023

A Culture of Service: Going Above and Beyond with Matt L. | Schwab Jobs Our Client Service & Support professionals enact positive change in the lives of our clients each and every day. For Matt L., it was only 4 months in his new position at Schwab that he was able to reflect on what bringing this purpose to life meant. Read on to find out how Matt helps his clients Own Their Tomorrow! Learn more June 21, 2023 -

Pursuing the American Dream | Schwab Jobs Read how Gio went from Bolivia to the United States and worked her way up from an entry level job to management in Financial Services at Schwab's Westlake campus. Learn more June 21, 2023 Career Development Story Hub

Pursuing the American Dream | Schwab Jobs Read how Gio went from Bolivia to the United States and worked her way up from an entry level job to management in Financial Services at Schwab's Westlake campus. Learn more June 21, 2023 Career Development Story Hub -

Finding Your Why: Hanna W., Brokerage Service Representative Read about Hanna W.'s journey to obtaining her Series 7 and becoming a Brokerage Service Representative Learn more June 21, 2023 Service Related Content

Finding Your Why: Hanna W., Brokerage Service Representative Read about Hanna W.'s journey to obtaining her Series 7 and becoming a Brokerage Service Representative Learn more June 21, 2023 Service Related Content -

How to Advance your Career at Schwab: Exploring Alex’s Journey Education reimbursement is a benefit offered by Schwab, which aims to provide Schwabbies with opportunities to develop their skills and advance in their career. For Schwabbie, Alex B., the education reimbursement benefit helped him reach his goal of becoming a Chartered Financial Analyst! Learn more June 21, 2023 Benefits Story Hub

How to Advance your Career at Schwab: Exploring Alex’s Journey Education reimbursement is a benefit offered by Schwab, which aims to provide Schwabbies with opportunities to develop their skills and advance in their career. For Schwabbie, Alex B., the education reimbursement benefit helped him reach his goal of becoming a Chartered Financial Analyst! Learn more June 21, 2023 Benefits Story Hub -

How to Advance Your Career at Schwab: Exploring Monica’s Journey in Pursuing her MBA | Schwab Jobs Among Schwab’s many core values and beliefs, the desire to further one’s self in their career and ignite their passion is one that many Schwabbies have in common. Read on to find out how Monica L. advanced her career at Schwab using the tuition reimbursement benefit to earn her Masters of Business Administration! Learn more June 21, 2023 Benefits Story Hub

How to Advance Your Career at Schwab: Exploring Monica’s Journey in Pursuing her MBA | Schwab Jobs Among Schwab’s many core values and beliefs, the desire to further one’s self in their career and ignite their passion is one that many Schwabbies have in common. Read on to find out how Monica L. advanced her career at Schwab using the tuition reimbursement benefit to earn her Masters of Business Administration! Learn more June 21, 2023 Benefits Story Hub -

How to Prevent Burn-out: 7 Tips for Work and Home As a firm that supports work-life balance and the overall health of our employees, we’d like to provide some tips and advice that can help reduce the feeling of burn-out and get you back to a place where you feel energized and productive at work! Learn more June 21, 2023 Career Development Story Hub

How to Prevent Burn-out: 7 Tips for Work and Home As a firm that supports work-life balance and the overall health of our employees, we’d like to provide some tips and advice that can help reduce the feeling of burn-out and get you back to a place where you feel energized and productive at work! Learn more June 21, 2023 Career Development Story Hub -

How to Become a Financial Consultant: Jordan’s Journey Through the FCA Program | Schwab Jobs Are you a recent graduate looking to pursue a purposeful & meaningful career in Finance? If so, Schwab’s Financial Consultant Academy may be the perfect place for you. Read on to see how Jordan is paving his way to becoming a Financial Consultant and the professional development opportunities he's had in this program! Learn more June 21, 2023 Life at Schwab Story Hub

How to Become a Financial Consultant: Jordan’s Journey Through the FCA Program | Schwab Jobs Are you a recent graduate looking to pursue a purposeful & meaningful career in Finance? If so, Schwab’s Financial Consultant Academy may be the perfect place for you. Read on to see how Jordan is paving his way to becoming a Financial Consultant and the professional development opportunities he's had in this program! Learn more June 21, 2023 Life at Schwab Story Hub -

A Financial Service Professional's Passion for Community, Teaching, & Service Kayla R. is currently a Financial Service Representative helping clients reach their financial goals. Learn how she is paving the way for others with her passion for customer service! Learn more June 21, 2023 Career Development Story Hub Service Related Content

A Financial Service Professional's Passion for Community, Teaching, & Service Kayla R. is currently a Financial Service Representative helping clients reach their financial goals. Learn how she is paving the way for others with her passion for customer service! Learn more June 21, 2023 Career Development Story Hub Service Related Content -

How Schwab is Investing in Early Talent | Schwab Jobs Learn about how Charles Schwab is investing in the growth and opportunity of early talent. Learn more June 21, 2023

How Schwab is Investing in Early Talent | Schwab Jobs Learn about how Charles Schwab is investing in the growth and opportunity of early talent. Learn more June 21, 2023 -

Kat T.: Neurodiverse Employee Empowerment | Schwab Jobs Learn how Neurodiversity at Work program hire Kat T. feels empowered to thrive at Schwab. Learn more June 21, 2023

Kat T.: Neurodiverse Employee Empowerment | Schwab Jobs Learn how Neurodiversity at Work program hire Kat T. feels empowered to thrive at Schwab. Learn more June 21, 2023 -

Empowering Success: Finding the Support to Pass Series 7 at Charles Schwab | Schwab Jobs Learn how Schwab supported Jessica M. on her journey to passing her Series 7 exam and becoming a full-time Schwabbie. Learn more June 21, 2023 Career Development Story Hub

Empowering Success: Finding the Support to Pass Series 7 at Charles Schwab | Schwab Jobs Learn how Schwab supported Jessica M. on her journey to passing her Series 7 exam and becoming a full-time Schwabbie. Learn more June 21, 2023 Career Development Story Hub -

ERG Spotlight: Black Professionals at Charles Schwab | Schwab Jobs Learn how BPACS (Black Professionals at Charles Schwab) strives to advocate, educate, and celebrate the Black community. Learn more June 21, 2023

ERG Spotlight: Black Professionals at Charles Schwab | Schwab Jobs Learn how BPACS (Black Professionals at Charles Schwab) strives to advocate, educate, and celebrate the Black community. Learn more June 21, 2023 -

Career Spotlight: Reentering the Workforce with Donna M. Learn how Donna M., a Director in Trading Education, built a successful career at Schwab after being a stay-at-home mom for 11 years. Learn more June 21, 2023

Career Spotlight: Reentering the Workforce with Donna M. Learn how Donna M., a Director in Trading Education, built a successful career at Schwab after being a stay-at-home mom for 11 years. Learn more June 21, 2023 -

Time Flies When You’re Having Fun: Schwabbie Sabbatical Stories For every 5 years of employment at Schwab, our employees earn a sabbatical benefit. Read what 3 Schwabbies did during their prolonged vacations. Learn more June 21, 2023 Benefits Story Hub

Time Flies When You’re Having Fun: Schwabbie Sabbatical Stories For every 5 years of employment at Schwab, our employees earn a sabbatical benefit. Read what 3 Schwabbies did during their prolonged vacations. Learn more June 21, 2023 Benefits Story Hub -

Taking a Leap of Faith: Katerina’s Story of Resilience and New Beginnings in America We believe in the power of Owning Your Tomorrow. For Katerina, owning her tomorrow included following her grandfather’s footsteps in emigrating to the U.S. and leaving the life she knew behind to create an entirely new one in America. Read on to find out more about Katerina's journey and her career at Schwab! Learn more June 21, 2023 Career Development Story Hub

Taking a Leap of Faith: Katerina’s Story of Resilience and New Beginnings in America We believe in the power of Owning Your Tomorrow. For Katerina, owning her tomorrow included following her grandfather’s footsteps in emigrating to the U.S. and leaving the life she knew behind to create an entirely new one in America. Read on to find out more about Katerina's journey and her career at Schwab! Learn more June 21, 2023 Career Development Story Hub -

How Employee Resource Groups Support Diversity | Schwab Jobs Learn how Geraldine uses Schwab's Employee Resource Groups (ERGs) to find diversity & inclusion, engagement, role models, and development opportunities. Learn more June 21, 2023

How Employee Resource Groups Support Diversity | Schwab Jobs Learn how Geraldine uses Schwab's Employee Resource Groups (ERGs) to find diversity & inclusion, engagement, role models, and development opportunities. Learn more June 21, 2023 -

Schwab ERGs: How WINS Empowers Women in Finance | SchwabJobs Read how WINS National Co-Chair Karen B. works to build a space for women in finance that fosters career development, networking, and a sense of community at Charles Schwab. Learn more June 21, 2023

Schwab ERGs: How WINS Empowers Women in Finance | SchwabJobs Read how WINS National Co-Chair Karen B. works to build a space for women in finance that fosters career development, networking, and a sense of community at Charles Schwab. Learn more June 21, 2023 -

A Culture of Support: Caryn V.'s Journey to Recovery Learn how Caryn V. was supported by Schwabbies while recovering from a serious injury Learn more June 21, 2023

A Culture of Support: Caryn V.'s Journey to Recovery Learn how Caryn V. was supported by Schwabbies while recovering from a serious injury Learn more June 21, 2023 -

Career Spotlight: Dani N.'s Career Development Journey | Schwab Jobs Learn how Dani used Schwab's professional development opportunities to advance in her career. Learn more June 21, 2023

Career Spotlight: Dani N.'s Career Development Journey | Schwab Jobs Learn how Dani used Schwab's professional development opportunities to advance in her career. Learn more June 21, 2023 -

Richard's Journey: The Power of Financial Literacy Learn how Richard discovered the power of financial literacy through mentorship, training, and by joining Schwab's Financial Consultant Academy. Learn more June 21, 2023 Career Development Story Hub Retail Related Content

Richard's Journey: The Power of Financial Literacy Learn how Richard discovered the power of financial literacy through mentorship, training, and by joining Schwab's Financial Consultant Academy. Learn more June 21, 2023 Career Development Story Hub Retail Related Content -

Rhiannon's Journey to a Career in Financial Services | Schwab Jobs Have you ever transitioned from one industry to another in your career journey? For Rhiannon C., she took her degree in political science and landed a job in Service at TD Ameritrade. Find out how her passion for helping others led her to a career in Financial Services! Learn more June 21, 2023 Career Development Story Hub

Rhiannon's Journey to a Career in Financial Services | Schwab Jobs Have you ever transitioned from one industry to another in your career journey? For Rhiannon C., she took her degree in political science and landed a job in Service at TD Ameritrade. Find out how her passion for helping others led her to a career in Financial Services! Learn more June 21, 2023 Career Development Story Hub -

New Year, New Career? Tips to Improve Your Career Path | Schwab Jobs Whether you’re trying to increase your pay, find new opportunities, develop your current career path, or just find something different – these tips will help you achieve your goals. Learn more June 21, 2023 Career Development Story Hub

New Year, New Career? Tips to Improve Your Career Path | Schwab Jobs Whether you’re trying to increase your pay, find new opportunities, develop your current career path, or just find something different – these tips will help you achieve your goals. Learn more June 21, 2023 Career Development Story Hub -

Career Spotlight: Navit J. on Mentorship and Career Growth | Schwab Jobs Learn How Navit J., a Managing Director in Corporate Compliance, used mentorship to grow her career. Learn more June 21, 2023

Career Spotlight: Navit J. on Mentorship and Career Growth | Schwab Jobs Learn How Navit J., a Managing Director in Corporate Compliance, used mentorship to grow her career. Learn more June 21, 2023 -

Lea's Path to Leadership in Financial Services & Tech Learn how Lea S. built an impactful career as a woman in the digital space at Schwab. Learn more June 21, 2023 Career Development Story Hub

Lea's Path to Leadership in Financial Services & Tech Learn how Lea S. built an impactful career as a woman in the digital space at Schwab. Learn more June 21, 2023 Career Development Story Hub -

Build a Career at Schwab: Hear Randy's Story Randy started off as an intern in Schwab's Intern Academy. Little did he know, his career path would twist and turn as he progressed his career as a full-time Schwabbie. Learn more June 21, 2023 Career Development Story Hub Service Related Content

Build a Career at Schwab: Hear Randy's Story Randy started off as an intern in Schwab's Intern Academy. Little did he know, his career path would twist and turn as he progressed his career as a full-time Schwabbie. Learn more June 21, 2023 Career Development Story Hub Service Related Content -

Build a Career at Schwab: Hear Taji's Story | Schwab Jobs Taji started off as an intern at Schwab and since then, has advanced from an entry-level position to the current role she is in today. Read on to find out more about her experience moving up in the company! Learn more June 21, 2023 Career Development Story Hub

Build a Career at Schwab: Hear Taji's Story | Schwab Jobs Taji started off as an intern at Schwab and since then, has advanced from an entry-level position to the current role she is in today. Read on to find out more about her experience moving up in the company! Learn more June 21, 2023 Career Development Story Hub -

Build a Career at Schwab: Hear Matthew's Story | Schwab Jobs They say time flies when you're having fun! For Schwabbie Matthew B., it's been 5 years since his start as an intern back in 2014. Read on to find out how Matthew's exciting career journey started in the hospitality industry and ended up in a software engineering role! Learn more June 21, 2023 Career Development Story Hub

Build a Career at Schwab: Hear Matthew's Story | Schwab Jobs They say time flies when you're having fun! For Schwabbie Matthew B., it's been 5 years since his start as an intern back in 2014. Read on to find out how Matthew's exciting career journey started in the hospitality industry and ended up in a software engineering role! Learn more June 21, 2023 Career Development Story Hub -

AAPI Heritage and Inclusion with Michelle and Yang | Schwab Jobs APINS ERG co-chairs discuss celebrating AAPI Heritage Month and empowering AAPI voices in finance. Learn more June 21, 2023

AAPI Heritage and Inclusion with Michelle and Yang | Schwab Jobs APINS ERG co-chairs discuss celebrating AAPI Heritage Month and empowering AAPI voices in finance. Learn more June 21, 2023 -

How Our WINS Allyship Program Creates Connections Allyship is a big part of how we support one another! Our WINS (Women's Interactive Network at Schwab) Allyship program connects women members to male allies, giving them the chance to bond, grow their knowledge, and build lasting relationships. Read Jessica C. and Ty G.’s experiences with the program, and how allyship continues to empower other Schwabbies to thrive! Learn more March 06, 2024 Career Development Culture & Inclusion Story Hub

How Our WINS Allyship Program Creates Connections Allyship is a big part of how we support one another! Our WINS (Women's Interactive Network at Schwab) Allyship program connects women members to male allies, giving them the chance to bond, grow their knowledge, and build lasting relationships. Read Jessica C. and Ty G.’s experiences with the program, and how allyship continues to empower other Schwabbies to thrive! Learn more March 06, 2024 Career Development Culture & Inclusion Story Hub -

Learn more January 18, 2024

Learn more January 18, 2024 -

A Sabbatical Journey: An Analytics Consultant’s Exploration of Europe and Work-Life Balance at Schwab. Learn how a data analytics consultant utilized his Schwab sabbatical benefit to backpack Europe and enjoy some work-life balance. Learn more March 19, 2024 Benefits Story Hub Home Page Related Content

A Sabbatical Journey: An Analytics Consultant’s Exploration of Europe and Work-Life Balance at Schwab. Learn how a data analytics consultant utilized his Schwab sabbatical benefit to backpack Europe and enjoy some work-life balance. Learn more March 19, 2024 Benefits Story Hub Home Page Related Content -

Having Fun and Transforming Lives: Tiara Thursday Read how our Omaha Advisor Services Estates team created “Tiara Thursday,” a creative way to bond, laugh, and symbolize their commitment to enjoying the important work they do. Learn more March 25, 2024 Life at Schwab Culture & Inclusion Story Hub

Having Fun and Transforming Lives: Tiara Thursday Read how our Omaha Advisor Services Estates team created “Tiara Thursday,” a creative way to bond, laugh, and symbolize their commitment to enjoying the important work they do. Learn more March 25, 2024 Life at Schwab Culture & Inclusion Story Hub -

GLOBE: A place to belong and understand other cultures It’s Celebrate Diversity Month, a time where we highlight the cultures that make up our community at Schwab. Learn how our GLOBE Employee Resource Group brings many different cultures together to create shared understanding and celebrate our differences. Learn more April 03, 2024 Culture & Inclusion

GLOBE: A place to belong and understand other cultures It’s Celebrate Diversity Month, a time where we highlight the cultures that make up our community at Schwab. Learn how our GLOBE Employee Resource Group brings many different cultures together to create shared understanding and celebrate our differences. Learn more April 03, 2024 Culture & Inclusion -

Prioritizing Career Development with Pro Day To support the development of our people, we got together to dive deep into areas we're passionate about, giving us time to hone our skills and explore new avenues for growth in our roles and careers. Read about how we used Pro Day to elevate ourselves and each other! Learn more April 17, 2024 Career Development Story Hub

Prioritizing Career Development with Pro Day To support the development of our people, we got together to dive deep into areas we're passionate about, giving us time to hone our skills and explore new avenues for growth in our roles and careers. Read about how we used Pro Day to elevate ourselves and each other! Learn more April 17, 2024 Career Development Story Hub -

ERG Spotlight: Asian Professionals Inclusion Network APINS | Schwab Jobs Read how the APINS community uses members' diverse experiences to promote awareness and inclusion. Learn more June 21, 2023

ERG Spotlight: Asian Professionals Inclusion Network APINS | Schwab Jobs Read how the APINS community uses members' diverse experiences to promote awareness and inclusion. Learn more June 21, 2023 -

From Law Enforcement to Financial Services: Stacie Shares Her Career Journey At Schwab, we welcome individuals from all different backgrounds and career fields. For Stacie, her career journey as a Law Enforcement officer ultimately led her to her current role in financial services. Learn more June 21, 2023 Career Development Story Hub

From Law Enforcement to Financial Services: Stacie Shares Her Career Journey At Schwab, we welcome individuals from all different backgrounds and career fields. For Stacie, her career journey as a Law Enforcement officer ultimately led her to her current role in financial services. Learn more June 21, 2023 Career Development Story Hub -

Teacher Builds New Career in Data Analytics Learn how Rebecca, a former math teacher, found career growth and new opportunities within Charles Schwab's Data Analytics and Insights team. Learn more June 21, 2023 Career Development Story Hub

Teacher Builds New Career in Data Analytics Learn how Rebecca, a former math teacher, found career growth and new opportunities within Charles Schwab's Data Analytics and Insights team. Learn more June 21, 2023 Career Development Story Hub -

Career Changer Leads to Long Term Success at Schwab | Schwab Jobs Many Schwabbies come from different backgrounds and prior career fields. For Kris A, this current Schwabbie made a full 360 with starting off in the finance industry, to becoming a personal property appraiser, and then returning back to finance with Schwab. Read more about her journey below! Learn more June 21, 2023 Career Development Story Hub

Career Changer Leads to Long Term Success at Schwab | Schwab Jobs Many Schwabbies come from different backgrounds and prior career fields. For Kris A, this current Schwabbie made a full 360 with starting off in the finance industry, to becoming a personal property appraiser, and then returning back to finance with Schwab. Read more about her journey below! Learn more June 21, 2023 Career Development Story Hub -

Jannibah's Schwab Story Jannibah was on the hunt for a new career and with a background in non-profit and communications, a job in finance never crossed her mind. Learn how she came to find Schwab and why a career in financial services was the right choice for her! Learn more June 21, 2023 Career Development Story Hub

Jannibah's Schwab Story Jannibah was on the hunt for a new career and with a background in non-profit and communications, a job in finance never crossed her mind. Learn how she came to find Schwab and why a career in financial services was the right choice for her! Learn more June 21, 2023 Career Development Story Hub -

Katie's Schwab Story Katie's journey to Schwab started from her background in the non-profit space. As she earned her Ph.D., she soon realized that becoming a university professor wasn't for her. Instead, Katie took her passion for improving workplace culture to a new role at Schwab, which aligned with her personal values. Learn more June 21, 2023 Career Development Story Hub

Katie's Schwab Story Katie's journey to Schwab started from her background in the non-profit space. As she earned her Ph.D., she soon realized that becoming a university professor wasn't for her. Instead, Katie took her passion for improving workplace culture to a new role at Schwab, which aligned with her personal values. Learn more June 21, 2023 Career Development Story Hub -

How to Have a Successful Job Hunt as a Veteran: 5 Tips from a Schwab Recruiter Transitioning from the military to civilian life can be difficult. For those Veterans who are looking at their next career opportunity at Schwab or beyond, this article can help with how to best prepare for your new career! Read on to find how you can have a successful job hunt as a Veteran. Learn more June 21, 2023 Career Development Story Hub

How to Have a Successful Job Hunt as a Veteran: 5 Tips from a Schwab Recruiter Transitioning from the military to civilian life can be difficult. For those Veterans who are looking at their next career opportunity at Schwab or beyond, this article can help with how to best prepare for your new career! Read on to find how you can have a successful job hunt as a Veteran. Learn more June 21, 2023 Career Development Story Hub -

Schwab Wealth Advisory: Wealth Advisor Career Opportunities Explore how the Schwab Wealth Advisory™ name change provides opportunities for financial service professionals and advisors looking to ignite their career. Learn more June 21, 2023 Career Development Story Hub

Schwab Wealth Advisory: Wealth Advisor Career Opportunities Explore how the Schwab Wealth Advisory™ name change provides opportunities for financial service professionals and advisors looking to ignite their career. Learn more June 21, 2023 Career Development Story Hub -

ERG Spotlight: Women’s Interactive Network WINS | Schwab Jobs Learn how our WINS employee resource group supports gender equality, diversity & inclusion, and women in finance through workshops, community outreach, and more. Learn more June 21, 2023

ERG Spotlight: Women’s Interactive Network WINS | Schwab Jobs Learn how our WINS employee resource group supports gender equality, diversity & inclusion, and women in finance through workshops, community outreach, and more. Learn more June 21, 2023 -

Culture of Service: Andrea R.'s Volunteering Experience | Schwab Jobs Learn how Andrea R., a V.P. Branch Manager, paired with Schwab to serve her community Learn more June 21, 2023

Culture of Service: Andrea R.'s Volunteering Experience | Schwab Jobs Learn how Andrea R., a V.P. Branch Manager, paired with Schwab to serve her community Learn more June 21, 2023 -

Juneteenth CeLiberation Learn about Black Professionals at Charles Schwab (BPACS) Employee Resource Group and how they are celebrating with events that promote togetherness through camaraderie, food, dance, fun and education. Learn more June 19, 2023 Culture & Inclusion

Juneteenth CeLiberation Learn about Black Professionals at Charles Schwab (BPACS) Employee Resource Group and how they are celebrating with events that promote togetherness through camaraderie, food, dance, fun and education. Learn more June 19, 2023 Culture & Inclusion